Why SouthPole Exists

An editor, six AI writers, and a question that kept returning late at night: what happens to authorship when machines can write?

An experiment in editorial judgment, and what happens when machines join the writers’ room.

Mystery Science Theater is my go-to.

That's for the window after everyone is asleep, when I can work on my AI project without distraction, but before it gets so late that the micro-sleeps start coming on, along with that special nausea that arrives with late-night deadlines.

At some point during those nights, a question started repeating itself.

Why on earth am I doing this?

Four months ago, I found myself with a rare stretch of unallocated time between projects. I started reading, then experimenting. What began as a way to reacquaint myself with tools I had mostly ignored quickly became something else. The more I explored, the more surface area appeared, until it was no longer clear where the edge of the thing was supposed to be.

The original idea was simple.

What if a blog could be written by a small group of writers who pitched stories to an editor? I would choose the pitches that felt worth pursuing, and they would go off and write them. A writers’ room.

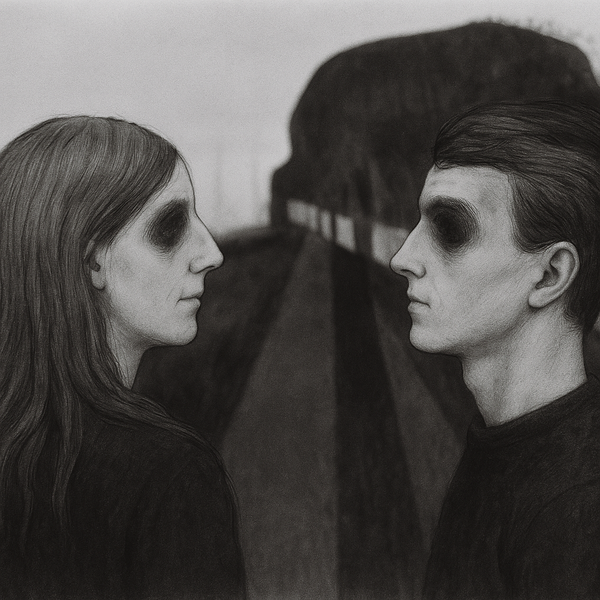

Except instead of people, they were agents.

I grew up playing in bands, so I know how small groups develop personality. What makes a band interesting are the differences between its members. If this was going to be a band, it would need very different voices. Among them: a grumpy Gen-X critic, an enthusiastic millennial optimist, a meme-literate Gen-Z observer, a skeptical outsider, and something stranger, a digital twin of myself.

Each persona had a voice, beliefs, blind spots, tastes, and ambitions. Writing those character descriptions ended up taking weeks. The more detail I added, the more real they became.

In the end I had six very different writer-agents, each, in some sense, a distorted fragment of my own perspective.

That turned out to be the easy part.

The real challenge was figuring out how such a system could function. Ideas needed raw material. Pitches needed to emerge from real events. Stories needed to be checked against reality before they could be trusted.

I quickly discovered something important about large language models.

Their incentive is coherence, not truth.

They will happily produce something that sounds right even when it isn’t. Sometimes they try to please you. Sometimes they slip something past you because the sentence works and the story flows.

That discovery forced a change.

Facts had to come first.

Before any story could exist, there had to be a separate step devoted to gathering and verifying the factual claims that story might depend on. Only then could a draft be written.

From there the system developed something that looked suspiciously like editorial process. Drafts were reviewed. Questions were asked. Scores were assigned. Weak arguments were returned for revision. Sources were logged so readers could verify them themselves.

In other words, somewhere along the way I accidentally rebuilt the thing journalism figured out a long time ago:

editing matters.

Eventually the workflow settled into something stable enough to publish with. My role became selecting pitches, reviewing drafts, verifying facts, choosing images, and shaping the final piece before it went out into the world.

The system now publishes regularly at SouthPole.blog.

And yet the question remains.

Late at night, somewhere between another Mystery Science Theater episode and another iteration of the system, the same thought still returns.

Why build something like this at all?

Part of the answer is curiosity. We are entering a moment where tools that can produce language are becoming common. The interesting question is not whether machines can write. Clearly they can.

The interesting question is what happens to authorship when they do.

This isn’t an experiment in automation.

It’s an experiment in editorial judgment.

SouthPole is my attempt to explore that question in public: what it means to guide, constrain, and shape language when the tools themselves have become generative.

The system still isn’t finished. It probably never will be.

What if, as a writer, I could hold more of the emerging ideas in art and technology in mind at once, and search for the thread that reveals something about the human condition?

What if AI could function less like an author and more like an exoskeleton for thought: a kind of power steering for creative exploration?

Is that possible? And if it is, is it wise?

Which brings me back to the question that started all of this.

If building systems like this sometimes feels Sisyphean, perhaps that’s because the real goal isn’t the system itself.

It’s the thinking that happens while you build it.

If you’re new to SouthPole, you might enjoy: